
Thanks to the stats package from SciPy, computing the confidence interval is very straightforward. For the complete working example, have a look at simple_lr.py on my GitHub.

How should you use linear regression?

As mentioned earlier in this post, I will also show you how to implement the same concept with a tried and tested library. My library of choice when it comes to machine learning is scikit-learn.
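As a rough sketch of what the scikit-learn route can look like, the snippet below fits `LinearRegression` to synthetic data from `make_regression` and cross-checks the result against the closed-form slope and intercept formulas. The sample size, noise level and random seed are my own assumptions, not values taken from simple_lr.py.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression

# Synthetic single-feature data. n_samples, noise and random_state are
# arbitrary choices made for this sketch.
X, y = make_regression(n_samples=100, n_features=1, noise=10.0, random_state=0)

model = LinearRegression()
model.fit(X, y)  # X must be 2-D: (n_samples, n_features)

slope = float(model.coef_[0])        # plays the role of b1
intercept = float(model.intercept_)  # plays the role of b0
r2 = model.score(X, y)               # coefficient of determination on the training data

# Cross-check against the closed-form least-squares formulas.
x = X[:, 0]
b1 = np.sum((x - x.mean()) * (y - y.mean())) / np.sum((x - x.mean()) ** 2)
b0 = y.mean() - b1 * x.mean()

print(f"slope={slope:.4f} intercept={intercept:.4f} r2={r2:.4f}")
```

For a single feature, the library result and the hand-computed coefficients should agree to within floating-point error, which is a handy sanity check when moving from a hand-written model to a library.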

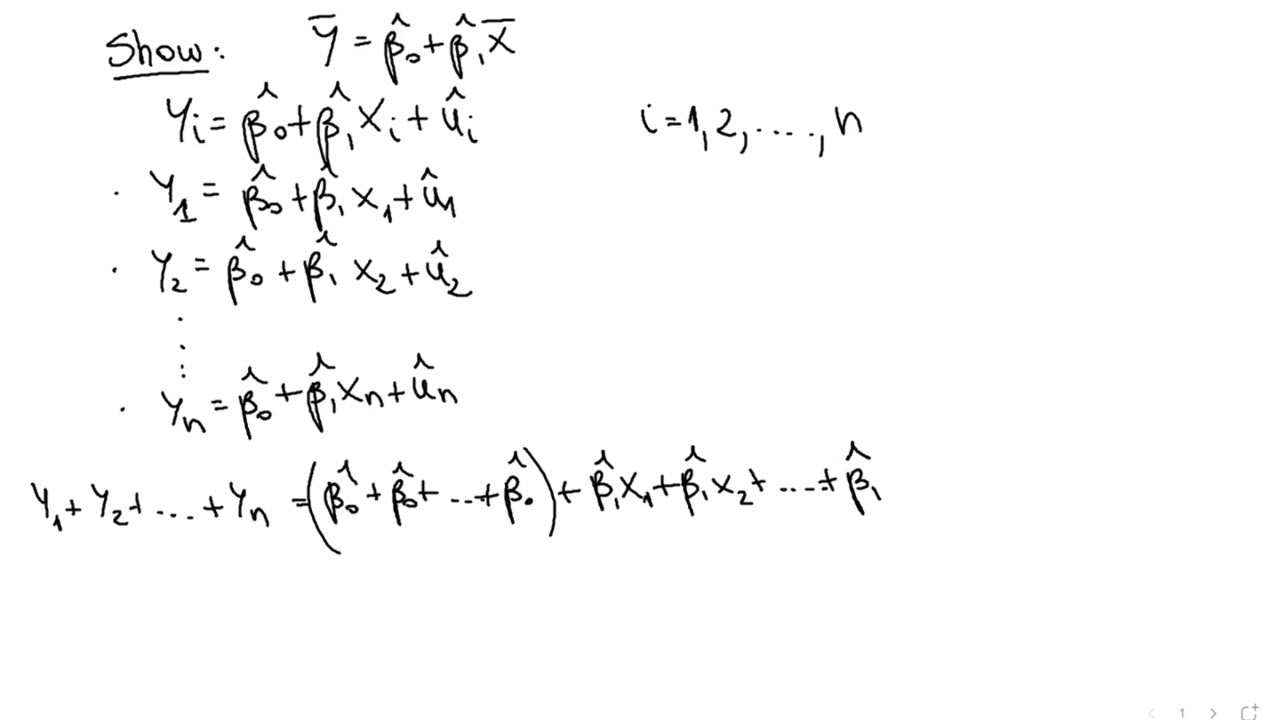

I will show an example using scikit-learn at the end of this article.

The first algorithm you will need to understand is the equation of a straight line, y = mx + b, where y is how far up the line is drawn on the y axis, x is how far along the x axis, m is the slope, and b is the value of y when x is 0. If you need a refresher on how this is calculated, I've found a great article which explains this in detail.

Following on from the equation of a line, the simple linear algorithm is very similar: the prediction is b0 + b1 * x, where b1 is the slope and b0 is the intercept. The example imports `make_regression` from `sklearn.datasets`, and the `fit(self, features: np.array, target: np.array)` method estimates both coefficients from the data and their means (`xbar` and `ybar`):

`self.b1 = np.sum((self.X - self.xbar) * (self.y - self.ybar)) / np.sum(np.power(self.X - self.xbar, 2))`

`self.b0 = self.ybar - (self.b1 * self.xbar)`

The class above doesn't have a predict method; I will cover this shortly.

Creating a simple linear model class

Firstly, the class is initialized with zero values for the required components. Strictly speaking, this isn't required, but it is good practice.

The predict method ("predict regression line using existing model") returns `self.b0 + self.b1 * X`. A helper, `_squared_error(y, yhat)`, feeds the method that calculates the coefficient of determination, which compares the squared error around the regression line with the squared error around the mean of y (`se_y_mean`).

Lastly, the plot method creates a nice graph showing the regression line with a 95% confidence interval. The actual values are drawn with `plt.scatter(X, y, color='black')`, and the interval is computed with `conf = norm.interval(0.95, loc=np.mean(yhat), scale=yhat.std())`.
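The pieces described above can be pulled together into one small class. This is a minimal sketch under my own assumptions rather than the original simple_lr.py: the class name `SimpleLinearRegression`, the `r_squared` method name and the demo data are hypothetical, and plotting is left out so the example only needs NumPy.

```python
import numpy as np


class SimpleLinearRegression:
    """Least-squares fit of y = b0 + b1 * x (illustrative sketch)."""

    def __init__(self):
        # Start from zero values; not strictly required, but good practice.
        self.b0 = 0.0  # intercept
        self.b1 = 0.0  # slope

    def fit(self, features, target):
        X = np.asarray(features, dtype=float).ravel()
        y = np.asarray(target, dtype=float).ravel()
        xbar, ybar = X.mean(), y.mean()
        # Slope: summed co-deviation of X and y over the squared deviation of X.
        self.b1 = np.sum((X - xbar) * (y - ybar)) / np.sum(np.power(X - xbar, 2))
        # Intercept: makes the line pass through the point (xbar, ybar).
        self.b0 = ybar - self.b1 * xbar
        return self

    def predict(self, X):
        """Predict the regression line using the existing model."""
        return self.b0 + self.b1 * np.asarray(X, dtype=float).ravel()

    @staticmethod
    def _squared_error(y, yhat):
        # Sum of squared differences between two arrays of equal length.
        return float(np.sum((y - yhat) ** 2))

    def r_squared(self, X, y):
        """Coefficient of determination: 1 - SSE(line) / SSE(mean)."""
        y = np.asarray(y, dtype=float).ravel()
        se_line = self._squared_error(y, self.predict(X))
        se_mean = self._squared_error(y, np.full_like(y, y.mean()))
        return 1.0 - se_line / se_mean


# Hypothetical data lying exactly on y = 1 + 2x, so the fit is easy to verify by hand.
X_demo = np.array([1.0, 2.0, 3.0, 4.0])
y_demo = np.array([3.0, 5.0, 7.0, 9.0])
model = SimpleLinearRegression().fit(X_demo, y_demo)
print(model.b0, model.b1, model.r_squared(X_demo, y_demo))
```

Because the demo points sit exactly on a line, the fitted slope and intercept recover that line and the coefficient of determination is 1. With noisy data the same code applies, and the value falls below 1.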

In both instances regression algorithms were applied to the field of astronomy.

The linear regression method suggests that there is a linear relationship between the independent and dependent variables. Therefore, when the independent variable increases or decreases, it has a direct impact on the dependent variable.

Building a Simple Linear Regression model in Python

I believe the best way to learn how something works is to reverse engineer it. This is precisely what I will be covering in this section.

Building your own simple linear regression model in Python is straightforward; however, I don't recommend doing so for any production workloads. There are some very good machine learning libraries which you should use instead.

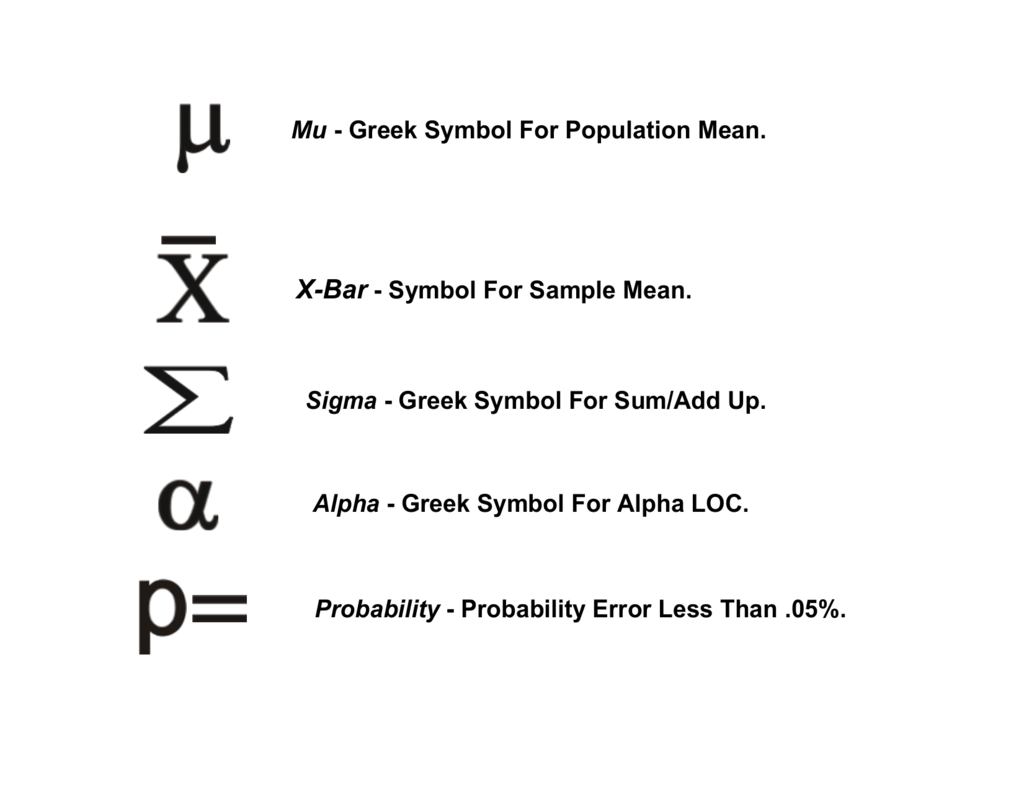

One of the most basic machine learning techniques is simple linear regression. In this post I will cover how to implement simple linear regression in Python.

A regression problem is one where you try to predict a target value given one or more features. But let's break this down a little further to get a better understanding.

Features

A feature is an individual property or characteristic of the object we're observing. In short, it can be either a numerical or a categorical value. Specifically for simple linear regression, all features must be numerical values.

Features are referred to by various names, such as independent variables, predictors and covariates, and in algorithms they are often represented by an upper case X.

[Table: example dataset showing features and target variables; the only surviving column header is "Hours Studied (X)"]

Target

A target is the value you're trying to predict with a model. It can be either a numerical or a categorical value, but is often represented numerically in both cases. Target variables are also referred to by various names, such as dependent variable, target variable, or a lower case y in algorithms. In the case of simple linear regression, our target values will be numerical values.

Simple linear regression is not a new concept; it has been around since the early 1800s.
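To make the feature and target terminology concrete, here is a small sketch of how such a dataset might be laid out in code. All the numbers are hypothetical values invented for illustration, and the target name `test_score` is an assumption on my part, since only the "Hours Studied (X)" column header is known.

```python
import numpy as np

# Hypothetical values for illustration only, not the article's original table.
hours_studied = np.array([1.0, 2.0, 3.0, 4.0, 5.0])    # feature, upper case X
test_score = np.array([52.0, 58.0, 65.0, 70.0, 78.0])  # target, lower case y (assumed name)

# Many libraries expect features as a 2-D array:
# one row per observation, one column per feature.
X = hours_studied.reshape(-1, 1)
y = test_score

print(X.shape, y.shape)
```

Simple linear regression uses exactly one feature column, so `X` has a single column here, while `y` stays one-dimensional.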
